Nowadays, numerous organisations of different sizes and business sectors operate in highly challenging and dynamic environments, where the supporting information systems (IS) are becoming increasingly complex. In this context, assistive tools capable of tackling such complexity have the potential to help users improve their performance and effectiveness, as well as to streamline business processes and promote competitiveness at the enterprise level.

Most robots are programmed to carry out specific tasks routinely, with only minor variations. However, a growing number of applications from small and medium-sized enterprises (SMEs) require robots to work alongside human workers. To smooth the collaborative task flow and improve collaboration efficiency, a better approach is to enable the robot to infer what kind of assistance its human coworker needs and to take the right action at the right time. This paper proposes a prediction-based human-robot collaboration model for assembly scenarios. An embedded learning-from-demonstration technique enables the robot to understand various task descriptions and customized working preferences. A state-enhanced convolutional long short-term memory (ConvLSTM)-based framework extracts high-level spatiotemporal features from the shared workspace and predicts future actions to facilitate fluent task transitions. The model allows the robot to adapt to predicted human actions and enables proactive assistance during collaboration. We applied the model to a seat-assembly experiment on a scale-model vehicle, where it inferred the human worker's intentions, predicted the coworker's future actions, and supplied the corresponding assembly parts. Compared with state-of-the-art methods that lack prediction awareness, the proposed framework yielded smoother collaboration and shorter idle times, and accommodated a wider range of working styles.
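The predict-then-assist idea in the human-robot collaboration model can be illustrated with a much simpler predictor than the one described. The sketch below is a hypothetical stand-in, not the authors' ConvLSTM. It learns a first-order (bigram) transition table from demonstrated action sequences. Given the worker's current action, it returns the part needed for the most likely next action. The action names, the part names, `NextActionPredictor` and `proactive_assist` are all assumptions made for illustration. Unlike the described framework, the sketch uses no visual or spatiotemporal input.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Optional

# Hypothetical mapping from an assembly action to the part the robot should hand over.
PARTS_FOR_ACTION: Dict[str, str] = {
    "install_left_seat": "left_seat",
    "install_right_seat": "right_seat",
    "install_rear_bench": "rear_bench",
}


class NextActionPredictor:
    """Bigram next-action model learned from demonstrated action sequences.

    A deliberately simple stand-in for a learned spatiotemporal predictor:
    it only counts which action directly followed which in demonstrations.
    """

    def __init__(self) -> None:
        # transitions[a][b] = number of times action b directly followed action a
        self.transitions: Dict[str, Counter] = defaultdict(Counter)

    def learn(self, demonstration: List[str]) -> None:
        """Update transition counts from one demonstrated sequence."""
        for current, following in zip(demonstration, demonstration[1:]):
            self.transitions[current][following] += 1

    def predict(self, current_action: str) -> Optional[str]:
        """Return the most frequent successor of current_action, or None if unseen."""
        counts = self.transitions.get(current_action)
        if not counts:
            return None
        return counts.most_common(1)[0][0]


def proactive_assist(predictor: NextActionPredictor, current_action: str) -> Optional[str]:
    """Return the part to fetch ahead of the predicted next action, if any."""
    predicted = predictor.predict(current_action)
    if predicted is None:
        return None
    return PARTS_FOR_ACTION.get(predicted)


if __name__ == "__main__":
    # Two workers with different (hypothetical) working preferences.
    worker_a = NextActionPredictor()
    worker_a.learn(["fix_frame", "install_left_seat", "install_right_seat", "install_rear_bench"])
    worker_b = NextActionPredictor()
    worker_b.learn(["fix_frame", "install_right_seat", "install_left_seat", "install_rear_bench"])

    print(proactive_assist(worker_a, "fix_frame"))  # left_seat
    print(proactive_assist(worker_b, "fix_frame"))  # right_seat
```

Training one predictor per worker is one simple way to reflect individual working preferences. In the example, two workers install the seats in opposite orders, so after the same step each is handed a different part.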
The experimental results showed that the recognition rate for the speech impaired is about 80%, while the recognition rate for normal speech is 100%. The test was conducted by mentioning the digits 1 through 10.

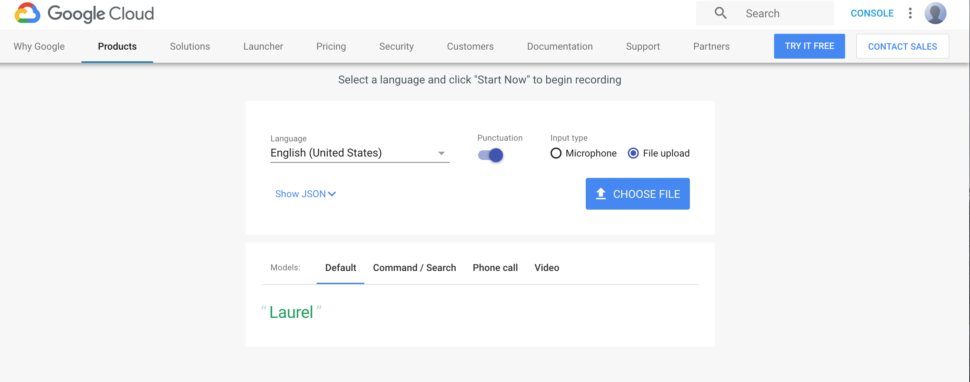

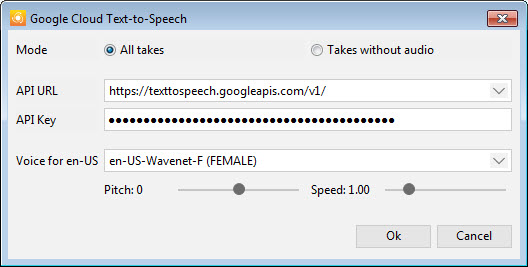

Although research into speech recognition to text has been widely practiced, this research try to develop speech recognition, specially for speech impaired's speech, as well as perform a likelihood calculation to see the factor of tone, pronunciation, and speech speed in speech recognition. The Google Cloud Speech API integrates with Google Cloud Storage for data storage. By using the Google Cloud Speech Application Programming Interface (API), this allows converting audio to text, and it is also user-friendly to use such APIs. In this research, the authors have developed a speech recognition application that can recognise speech of the speech impaired, and can translate into text form with input in the form of sound detected on a smartphone. Therefore, an application is required that can help and facilitate conversations for communication. Those who are speech impaired (tunawicara in the Indonesian language) suffer from abnormalities in their delivery (articulation) of the language as well their voice in normal speech, resulting in difficulty in communicating verbally within their environment.
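The recognition rates reported for the digit test (about 80% for speech-impaired speakers and 100% for normal speech) are proportions of correctly recognised utterances. If each digit is spoken once, 80% corresponds to 8 of 10 correct. The sketch below shows one way such a rate could be scored. It assumes that the recogniser returns one transcript per spoken digit. It also assumes that a transcript may come back either as a numeral or as an Indonesian number word. `normalize`, `recognition_rate` and the sample transcripts are hypothetical and are not data from the study.

```python
from typing import List, Optional

# Indonesian number words for 1-10 (assumption: transcripts may use words instead of numerals).
INDONESIAN_DIGITS = {
    "satu": 1, "dua": 2, "tiga": 3, "empat": 4, "lima": 5,
    "enam": 6, "tujuh": 7, "delapan": 8, "sembilan": 9, "sepuluh": 10,
}


def normalize(transcript: str) -> Optional[int]:
    """Map a transcript such as '7' or 'tujuh' to an integer, or None if unrecognised."""
    text = transcript.strip().lower()
    if text.isdecimal():
        return int(text)
    return INDONESIAN_DIGITS.get(text)


def recognition_rate(expected: List[int], transcripts: List[str]) -> float:
    """Percentage of utterances whose transcript matches the digit actually spoken."""
    if len(expected) != len(transcripts):
        raise ValueError("expected and transcripts must have the same length")
    if not expected:
        raise ValueError("at least one utterance is required")
    correct = sum(1 for digit, text in zip(expected, transcripts) if normalize(text) == digit)
    return 100.0 * correct / len(expected)


if __name__ == "__main__":
    spoken = list(range(1, 11))
    # Hypothetical transcripts; "enem" and "semilan" stand in for misrecognitions.
    clear = ["1", "2", "tiga", "4", "lima", "enam", "7", "delapan", "sembilan", "sepuluh"]
    impaired = ["1", "dua", "tiga", "empat", "lima", "enem", "7", "delapan", "semilan", "10"]
    print(recognition_rate(spoken, clear))     # 100.0
    print(recognition_rate(spoken, impaired))  # 80.0
```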
